A hyper-realistic and highly detailed image of a super tiny, extremely cute and slightly chubby eagle standing on the palm of a human hand. The eagle has soft, large round eyes, and tiny claws. The hand is gently cupped with natural tanned skin texture and lighting, showing scale and contrast between the eagle's tiny size and the human hand. The background is softly blurred to focus attention on the eagle with warm, natural lighting that adds depth and realism.

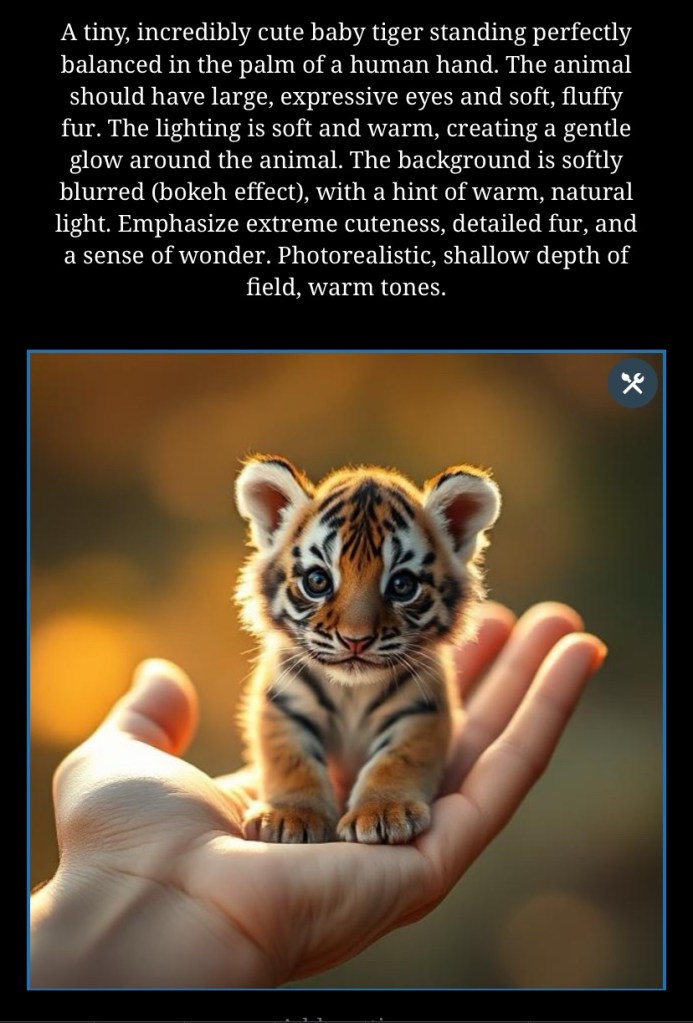

Prompt:

A tiny, incredibly cute [animal, e.g., baby elephant, fox cub, kitten, puppy] standing perfectly balanced in the palm of a human hand. The animal should have large, expressive eyes and soft, fluffy fur. The lighting is soft and warm, creating a gentle glow around the animal. The background is softly blurred (bokeh effect), with a hint of warm, natural light. Emphasize extreme cuteness, detailed fur, and a sense of wonder. Photorealistic, shallow depth of field, warm tones.

A tiny, incredibly cute baby puppy standing perfectly balanced in the palm of a human hand. The animal should have large, expressive eyes and soft, fluffy fur. The lighting is soft and warm, creating a gentle glow around the animal. The background is softly blurred (bokeh effect), with a hint of warm, natural light. Emphasize extreme cuteness, detailed fur, and a sense of wonder. Photorealistic, shallow depth of field, warm tones.

A tiny, incredibly cute baby koala-bear standing perfectly balanced in the palm of a human hand. The animal should have large, expressive eyes and soft, fluffy fur. The lighting is soft and warm, creating a gentle glow around the animal. The background is softly blurred (bokeh effect), with a hint of warm, natural light. Emphasize extreme cuteness, detailed fur, and a sense of wonder. Photorealistic, shallow depth of field, warm tones.
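The bracketed slot in the template is the only part that changes between the filled-in prompts above, so the substitution can be scripted. The following is a minimal sketch in Python. The names `PROMPT_TEMPLATE` and `build_prompt` are hypothetical, and the script only builds the prompt text; it does not call any image generator.

```python
# Template text copied from the prompt above; the bracketed list of example
# animals is replaced by a single named slot. All names here are illustrative.
PROMPT_TEMPLATE = (
    "A tiny, incredibly cute {animal} standing perfectly balanced in the palm "
    "of a human hand. The animal should have large, expressive eyes and soft, "
    "fluffy fur. The lighting is soft and warm, creating a gentle glow around "
    "the animal. The background is softly blurred (bokeh effect), with a hint "
    "of warm, natural light. Emphasize extreme cuteness, detailed fur, and a "
    "sense of wonder. Photorealistic, shallow depth of field, warm tones."
)


def build_prompt(animal: str) -> str:
    """Fill the animal slot of the template and return the finished prompt."""
    animal = animal.strip()
    if not animal:
        raise ValueError("animal must be a non-empty description")
    return PROMPT_TEMPLATE.format(animal=animal)


if __name__ == "__main__":
    # Print one finished prompt per animal, separated by blank lines.
    for animal in ["baby puppy", "baby koala-bear", "fox cub"]:
        print(build_prompt(animal))
        print()
```

Keeping the shared wording in one place means a change to the lighting or style description applies to every animal at once.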

Google Gemini summarize dissertation on standard digital image generating capabilities

2025

The Evolution of Digital Image Generation: From Pixels to Prompts

Historically, digital image generation was a laborious, manual process. Artists and designers used software like Photoshop to create images pixel by pixel, layer by layer, or through 3D modeling programs that required significant technical skill. While these methods offered immense control, they were time-consuming and often required specialized expertise.

The “new generation” of digital image generation, primarily driven by advancements in Artificial Intelligence, has revolutionized this landscape, making image creation faster, more accessible, and capable of producing results that were once unimaginable.

Early AI Explorations: The Seeds of Creativity

The journey of AI in image creation began decades ago with fundamental research into neural networks. Early attempts focused on pattern recognition and basic image manipulation. Key milestones include:

- Neural Networks (1950s onwards): Initial research laid the groundwork for AI to understand and process visual data.

- Generative Adversarial Networks (GANs) (2014 onwards): This was a pivotal breakthrough. Ian Goodfellow’s work on GANs introduced a “competitive” model where two neural networks – a generator and a discriminator – learn to create and distinguish realistic images. The generator creates images, and the discriminator tries to tell if they are real or fake. This continuous feedback loop allowed GANs to produce increasingly realistic and novel visuals. While a huge leap, GANs sometimes struggled with training stability and “mode collapse,” where they would produce a limited variety of outputs.
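The generator-versus-discriminator feedback loop described above can be made concrete with the loss value each network tries to minimize. This is a minimal sketch in plain Python, assuming the discriminator outputs a probability that an image is real. The function names are hypothetical, and the generator loss shown is the commonly used non-saturating variant, which this summary does not itself specify.

```python
import math


def discriminator_loss(p_real: float, p_fake: float) -> float:
    """Loss for the discriminator on one real and one generated image.

    p_real: discriminator's probability that a real image is real.
    p_fake: discriminator's probability that a generated image is real.
    The loss is low when p_real is near 1 and p_fake is near 0.
    """
    return -(math.log(p_real) + math.log(1.0 - p_fake))


def generator_loss(p_fake: float) -> float:
    """Non-saturating generator loss: low when the fake is judged real."""
    return -math.log(p_fake)


if __name__ == "__main__":
    # Case 1: the discriminator easily spots the fake.
    print(discriminator_loss(0.9, 0.1), generator_loss(0.1))
    # Case 2: the discriminator can no longer tell real from fake.
    print(discriminator_loss(0.5, 0.5), generator_loss(0.5))
```

The two losses pull in opposite directions on `p_fake`, which is the competition the text describes: whatever lowers the generator's loss raises the discriminator's.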

The New Generation: Diffusion Models and Beyond

The true paradigm shift in the “new generation” of digital image generation has been the rise of Diffusion Models. These models have largely surpassed GANs in terms of image quality, diversity, and ease of training, becoming the dominant force in text-to-image synthesis.

How Diffusion Models Work (Simplified):

Imagine an image. A diffusion model works in two phases:

- Forward Diffusion (Adding Noise): The model is trained by taking real images and gradually adding random “noise” to them, step by step, until the image becomes pure static. This process helps the AI understand the underlying structure of images and how different elements relate to each other, even when obscured by noise.

- Reverse Diffusion (Denoising): The core learning task is to reverse this process. The model learns to predict and remove the noise at each step, essentially learning how to reconstruct a clear image from pure noise.

To generate a new image, the model starts with random noise and then iteratively “denoises” it, guided by the patterns it learned from its training data and any input conditions (like a text prompt). This process allows for incredible detail, coherent compositions, and a high degree of control.
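The two phases and the generation loop can be sketched on a toy four-pixel "image" in plain Python. This is an illustration under stated assumptions, not how any particular product is implemented: the linear noise schedule, the deterministic DDIM-style update, and all function names are choices made for this sketch. Most importantly, `oracle_noise_predictor` is a stand-in for the trained neural network. It "cheats" by knowing the clean image, which is roughly what a model that had memorized a single training image would do.

```python
import math
import random


def make_alpha_bars(num_steps, beta_start=1e-4, beta_end=0.02):
    """Cumulative products of (1 - beta_t) for a linear noise schedule."""
    alpha_bars = []
    running = 1.0
    for t in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * t / (num_steps - 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def forward_noise(x0, alpha_bar, rng):
    """Forward diffusion: mix the clean pixels with Gaussian noise."""
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    scale_signal = math.sqrt(alpha_bar)
    scale_noise = math.sqrt(1.0 - alpha_bar)
    xt = [scale_signal * x + scale_noise * e for x, e in zip(x0, eps)]
    return xt, eps


def oracle_noise_predictor(xt, alpha_bar, x0):
    """ASSUMPTION: stand-in for a trained network; it solves for the noise
    using the known clean image x0 instead of learned weights."""
    scale_signal = math.sqrt(alpha_bar)
    scale_noise = math.sqrt(1.0 - alpha_bar)
    return [(x - scale_signal * c) / scale_noise for x, c in zip(xt, x0)]


def reverse_denoise(x_start, alpha_bars, predict_noise):
    """Reverse diffusion: step from the noisiest level down to a clean image
    using a deterministic DDIM-style update."""
    x = list(x_start)
    for t in range(len(alpha_bars) - 1, -1, -1):
        ab_t = alpha_bars[t]
        ab_prev = alpha_bars[t - 1] if t > 0 else 1.0
        eps_hat = predict_noise(x, ab_t)
        # Estimate the clean image implied by the predicted noise.
        x0_hat = [(xi - math.sqrt(1.0 - ab_t) * e) / math.sqrt(ab_t)
                  for xi, e in zip(x, eps_hat)]
        # Re-noise that estimate to the next, less noisy level.
        x = [math.sqrt(ab_prev) * c + math.sqrt(1.0 - ab_prev) * e
             for c, e in zip(x0_hat, eps_hat)]
    return x


def run_demo(seed=0):
    """Noise a toy image, then generate from pure noise with the oracle."""
    rng = random.Random(seed)
    x0 = [0.8, -0.3, 0.5, 0.1]  # toy "image" of four pixel values
    alpha_bars = make_alpha_bars(1000)
    noisy, _ = forward_noise(x0, alpha_bars[-1], rng)
    pure_noise = [rng.gauss(0.0, 1.0) for _ in x0]
    recovered = reverse_denoise(
        pure_noise, alpha_bars,
        lambda xt, ab: oracle_noise_predictor(xt, ab, x0),
    )
    return x0, noisy, recovered


if __name__ == "__main__":
    clean, noisy, recovered = run_demo()
    print("clean:    ", clean)
    print("noised:   ", noisy)
    print("recovered:", recovered)
```

In a real system the oracle is replaced by a network trained to predict the added noise from the noisy image alone (and, for text-to-image, from the prompt), so the reverse loop produces new images rather than reproducing one known image.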

Key Capabilities and Advancements of the New Generation (Diffusion Models):

- Unprecedented Photorealism: Modern diffusion models can generate images that are virtually indistinguishable from real photographs, capturing intricate details, textures, and realistic lighting.

- Text-to-Image Synthesis: This is the most revolutionary aspect. Users can simply type descriptive text prompts (e.g., “a tiny, incredibly cute prehistoric baby sabertooth-tiger standing perfectly balanced in the palm of a human hand”) and the AI generates a corresponding image. This democratizes image creation, allowing anyone to be a “creator” without traditional artistic skills.

- Broader Adaptability of Styles: Beyond photorealism, these models can generate images in a vast array of artistic styles, including cartoon, watercolor, oil painting, sketch, fantasy, sci-fi, and more. They can even blend different style elements.

- Increased Resolution and Detail: The generated images boast higher resolutions with clearer and richer details, allowing for finer textures, patterns, and color transitions.

- Sophisticated Light and Shadow Effects: The processing of light and shadow is highly advanced, simulating realistic illumination, reflections, and shadows, leading to a stronger sense of depth and dimensionality.

- Image Editing and Manipulation: Beyond generating from scratch, these models can also perform powerful image editing tasks like:

  - Inpainting: Filling in missing or selected areas of an image based on the surrounding context or a prompt.

  - Outpainting: Expanding an image beyond its original borders, intelligently generating new content that seamlessly extends the scene.

  - Style Transfer: Applying the artistic style of one image to the content of another.

  - Image-to-Image Transformation: Transforming an existing image based on a new prompt or stylistic input.

- Multi-Turn Generation and Instruction Following: Advanced models, like OpenAI’s GPT-4o, can now refine images through natural conversation, building upon previous generations and maintaining consistency across iterations. They can also follow highly detailed and complex prompts with remarkable accuracy, handling multiple objects and their relationships.

- Multimodal Capabilities: Some systems can take both text and image inputs, allowing for even more nuanced control and creative possibilities. For example, an uploaded image can serve as visual inspiration for a new generation.

- Speed and Efficiency: While early diffusion models could be computationally intensive, ongoing research is leading to faster generation times, with some frameworks simplifying the multi-step diffusion process into a single step while retaining quality.
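For the inpainting item in the list above, one simple way to picture the result is as a mask-based blend: pixels inside the selected area come from newly generated content, and everything else is kept from the original. The sketch below is only an illustration in plain Python. The function name `composite_inpaint` is hypothetical, and the "generated" values are placeholders for what a real model would produce from the surrounding context or a prompt.

```python
def composite_inpaint(original, generated, mask):
    """Blend two equally sized grayscale images given as lists of rows.

    mask[r][c] == 1 marks a pixel to replace with generated content;
    mask[r][c] == 0 keeps the original pixel unchanged.
    """
    if not (len(original) == len(generated) == len(mask)):
        raise ValueError("all inputs must have the same number of rows")
    result = []
    for orig_row, gen_row, mask_row in zip(original, generated, mask):
        if not (len(orig_row) == len(gen_row) == len(mask_row)):
            raise ValueError("all rows must have the same length")
        result.append(
            [g if m else o for o, g, m in zip(orig_row, gen_row, mask_row)]
        )
    return result


if __name__ == "__main__":
    original = [[10, 20], [30, 40]]
    generated = [[1, 2], [3, 4]]   # placeholder for model output
    mask = [[0, 1], [0, 0]]        # replace only the top-right pixel
    print(composite_inpaint(original, generated, mask))
```

Outpainting can be pictured the same way on a larger canvas, with the mask covering the new border area instead of a hole inside the image.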

Impact and Implications

The “new generation” of digital image generation has profound impacts across various fields:

- Democratization of Creativity: It has lowered the barrier to entry for content creation, enabling artists, designers, marketers, and even everyday users to produce high-quality visuals.

- Accelerated Content Production: Businesses can rapidly generate images for marketing, product design, game development, and more, significantly reducing production time and costs.

- New Artistic Mediums: AI image generation opens up entirely new avenues for artistic expression, allowing creators to explore concepts and styles previously impossible or impractical.

- Enhanced Accessibility: For individuals with disabilities, these tools can help them visualize ideas or create images where traditional methods might be challenging.

- Challenges and Ethical Considerations: Along with its benefits, this technology also raises important questions:

  - Copyright and Intellectual Property: How do we define ownership when AI generates images based on vast datasets of existing works?

  - Deepfakes and Misinformation: The ability to generate hyper-realistic images can be misused to create misleading or harmful content.

  - Job Displacement: Concerns exist about the potential impact on traditional creative professions.

  - Bias in Training Data: If the training data reflects existing biases, the AI models can perpetuate and amplify them in the generated images.

The Future of Digital Image Generation

The field is still rapidly evolving. We can expect to see:

- Even Greater Realism and Control: Models will become more nuanced in their understanding of prompts and their ability to generate precise details.

- Seamless Integration: AI image generation will be more deeply integrated into existing creative software and workflows.

- Personalization: More sophisticated models that can learn and adapt to individual user styles and preferences.

- Multimodal and Multidimensional Generation: Further advancements in generating not just static images, but also videos, 3D models, and even interactive virtual environments from text or other inputs.

- Improved Explainability and Controllability: Greater transparency into how AI models generate images, giving users more fine-tuned control over the creative process.

In conclusion, the evolution of digital image generation, particularly with the advent of diffusion models, represents a monumental leap. It has transformed from a niche technical skill to a broadly accessible and powerful creative tool, empowering individuals and industries alike, while also prompting critical discussions about ethics and societal impact. The “new generation” is not just about creating images; it’s about redefining the very nature of visual communication and creativity in the digital age.

https://photos.app.goo.gl/uy5c6x1JTHogLq7u6
